How AI Engineers Actually Write Code: Plan, Review, QA, Repeat

Everyone’s seeing the headlines: teams shipping features in hours, massive PRs generated by AI, entire apps stitched together from prompts.

And yes—AI can generate a lot of code fast.

But what those stories usually don’t show is the part that actually decides whether that code ships cleanly or turns into a mess: the behind-the-scenes engineering work. That means the planning, the critical review, and the quality assurance that turn “code that exists” into “software that works.”

In this post, I’ll walk you through the workflow strong teams use when building with AI, which is what I think of as how AI engineers actually write code.

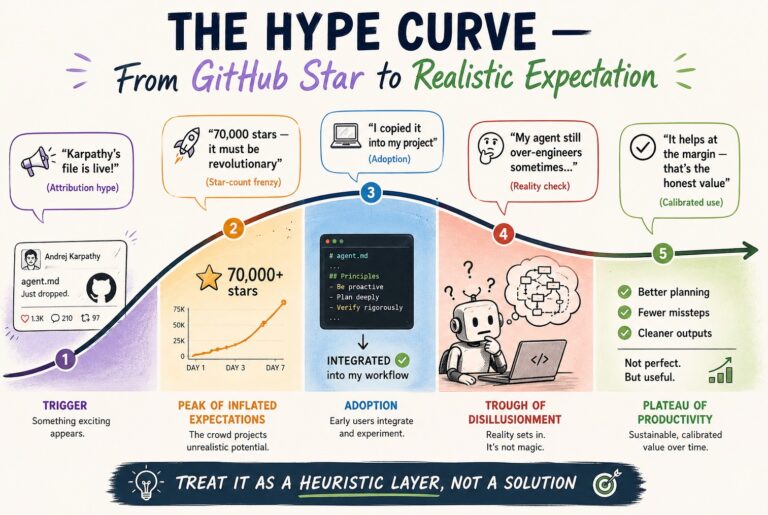

The Myth vs. Reality of AI Coding

The myth: one prompt → production code

The popular mental model is simple:

- Write a great prompt

- AI outputs the feature

- Tests pass

- Ship it

The reality: a loop, not a shortcut

In real engineering environments, the workflow looks more like:

- Plan

- Build (with AI)

- Review

- QA

- Refine

- Repeat

AI accelerates implementation—but it doesn’t replace the engineering process around it.

Step 1: Plan Before the First Prompt (Yes, Really)

Before a skilled engineer gives instructions to an AI agent, they get clear on what they’re building:

- What’s the goal of the feature?

- What are the constraints?

- What edge cases matter?

- What should not happen?

- What tradeoffs are acceptable?

This is where AI can help without writing a single line of code yet: by asking you questions.

Use questions to sharpen requirements

A useful pattern is to have the AI run a structured “grilling” session on your idea, forcing clarity before implementation begins. The reason this works is simple: questions reveal assumptions. And assumptions are where bugs are born.

Document decisions in a PRD

Once you’ve clarified the feature, capture it in a PRD (Product Requirements Document)—even if it’s lightweight.

A PRD becomes:

- Your source of truth

- A guide for the agent

- A reference for review

- The basis for QA

In other words: PRDs aren’t “bureaucracy.” They’re leverage.
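To make the planning questions and the PRD a bit more concrete, here is a minimal sketch of a lightweight PRD as a Python dataclass. The field names (`goal`, `constraints`, `edge_cases`, `non_goals`, `tradeoffs`) are hypothetical and simply mirror the planning questions above; they are not a standard PRD schema, and the example values are made up. The `open_questions` helper turns every unanswered section back into a question, which is one way to keep a grilling session going until nothing is left blank.

```python
from dataclasses import dataclass, field


@dataclass
class PRD:
    """A lightweight PRD; each field answers one planning question (hypothetical schema)."""
    feature: str
    goal: str = ""
    constraints: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    non_goals: list[str] = field(default_factory=list)  # "What should not happen?"
    tradeoffs: list[str] = field(default_factory=list)

    def open_questions(self) -> list[str]:
        """Return the planning questions whose sections are still empty."""
        questions = {
            "goal": "What's the goal of the feature?",
            "constraints": "What are the constraints?",
            "edge_cases": "What edge cases matter?",
            "non_goals": "What should not happen?",
            "tradeoffs": "What tradeoffs are acceptable?",
        }
        # A section counts as answered once it is a non-empty string or list.
        return [q for name, q in questions.items() if not getattr(self, name)]

    def to_markdown(self) -> str:
        """Render the PRD as a short document to hand to an agent or a reviewer."""
        lines = [f"# PRD: {self.feature}", "", f"Goal: {self.goal or '(unanswered)'}"]
        for title, items in [
            ("Constraints", self.constraints),
            ("Edge cases", self.edge_cases),
            ("Must not happen", self.non_goals),
            ("Accepted tradeoffs", self.tradeoffs),
        ]:
            lines.append("")
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items or ["(unanswered)"])
        return "\n".join(lines)


# Illustrative values only.
prd = PRD(
    feature="CSV export",
    goal="Let users download their invoices as CSV",
    constraints=["Max 10,000 rows per export"],
    edge_cases=["User has zero invoices"],
)
print(prd.open_questions())
# → ['What should not happen?', 'What tradeoffs are acceptable?']
```

The same object then serves every later stage: `to_markdown()` is what you'd paste into the agent's instructions, and the lists are what review and QA check against.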

Step 2: Review the Code the AI Wrote (This Is Where Engineers Level Up)

Here’s a practice that separates people who “use AI” from people who can actually ship with AI:

When the AI finishes, experienced engineers don’t just run tests and merge. They read the code.

Why code review matters more in an AI-first workflow

AI is great at:

- Pulling in libraries

- Wiring components together

- Copying patterns from common examples

But it can also:

- Introduce subtle inconsistencies

- Make assumptions you didn’t ask for

- Implement the “happy path” but miss real-world behavior

- Create design drift across files

Review is where you catch that.

A great insight from how Anthropic engineers work

In a Q&A about how Anthropic engineers code with AI, someone asked a smart question:

Before AI, developers learned by struggling through integrations and implementation details. Now AI handles that—so how do developers keep learning?

The answer: review the AI-generated code and ask questions about the implementation.

That’s not just about correctness—it’s about understanding:

- Why the code is structured this way

- What alternatives exist

- What tradeoffs were made

- What failure modes might appear

Code review becomes a learning loop.

Practical tip: use a second “review agent”

Many teams find it useful to review with a different model/tool than the one that generated the code:

- Agent A writes

- Agent B reviews

A different model brings a fresh perspective.

If you review with the same agent, at least start a new session with a clean context so it can review the code without being biased by the earlier conversation.
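One way to picture the clean-context advice: build the review request from scratch out of just the PRD and the diff, so nothing from the writing session leaks in. This is a minimal sketch; `build_review_request` and the role/content message shape are illustrative assumptions, not any particular vendor's API, and you would pass the result to whichever reviewing model or tool you use. The review instructions simply restate the failure modes listed earlier in this section.

```python
def build_review_request(prd_text: str, diff: str) -> list[dict[str, str]]:
    """Build a fresh message list for a review agent (hypothetical message format).

    Only the PRD and the diff are included. The writer agent's chat history is
    deliberately not a parameter, so the reviewer always starts with a clean context.
    """
    instructions = (
        "You are reviewing a pull request written by another agent. "
        "Check it against the requirements below. Flag subtle inconsistencies, "
        "assumptions the requirements did not ask for, happy-path-only logic, "
        "and design drift across files. For each finding, explain the tradeoff "
        "and the failure mode it could cause."
    )
    return [
        {"role": "system", "content": instructions},
        {"role": "user", "content": f"Requirements (PRD):\n{prd_text}\n\nDiff:\n{diff}"},
    ]


messages = build_review_request(
    prd_text="Goal: let users download invoices as CSV",
    diff="+def export_csv(rows):\n+    ...",
)
for message in messages:
    # Show the role and the start of each message that would be sent to the reviewer.
    print(message["role"], "->", message["content"][:40])
```

Leaving the conversation history out of the function signature is the design choice here: there is no argument through which the writer's context could be passed along by accident.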

Step 3: QA Like a User (Not Like a Developer)

Even if:

- Tests pass

- The PR looks clean

- The architecture makes sense

…something can still be broken in the real experience.

This is where the quality assurance engineer mindset comes in.

The “it works on my machine” trap is easier than ever to fall into

AI-generated code can look polished while still failing in the places users actually live:

- Empty states

- Invalid inputs

- Weird sequences of clicks

- Permissions and auth edge cases

- Performance under realistic usage
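Here is a small, made-up illustration of how that happens. The hypothetical `average_order_value_naive` function is the kind of code an assistant might produce: it passes the obvious happy-path test, yet an empty state (a customer with no orders) crashes it. The second version writes the empty state and invalid input down as explicit behavior; returning `0.0` for no orders is an assumed product decision that a real PRD should settle.

```python
def average_order_value_naive(order_totals: list[float]) -> float:
    """Happy-path version: looks polished and passes the obvious test."""
    return sum(order_totals) / len(order_totals)


def average_order_value(order_totals: list[float]) -> float:
    """Version that handles invalid input and the empty state explicitly."""
    if any(total < 0 for total in order_totals):
        raise ValueError("order totals cannot be negative")
    if not order_totals:
        return 0.0  # Empty state: no orders yet (assumed behavior; the PRD should decide)
    return sum(order_totals) / len(order_totals)


# Happy path: both versions agree, so a happy-path-only test suite stays green.
assert average_order_value_naive([10.0, 20.0]) == 15.0
assert average_order_value([10.0, 20.0]) == 15.0

# Empty state: the naive version raises ZeroDivisionError.
try:
    average_order_value_naive([])
except ZeroDivisionError:
    print("naive version crashes on an empty order list")

print(average_order_value([]))  # 0.0

# Invalid input: rejected with a clear error instead of a silently wrong average.
try:
    average_order_value([20.0, -5.0])
except ValueError as error:
    print(f"invalid input rejected: {error}")
```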

Generate a QA plan from your PRD

Remember that PRD? Use it.

Ask your AI to convert the PRD into a QA plan:

- Happy path checklist

- Edge cases

- Error states

- Boundary conditions

- “What should happen if…” scenarios

Then follow the plan step by step like a user seeing the feature for the first time.
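In practice you'd ask your AI to do this conversion. To show the shape of the result without a model in the loop, here's a minimal deterministic sketch that turns a lightweight PRD (a plain dict with hypothetical keys and made-up values) into a checklist grouped by the categories above. An AI-generated plan would be richer; the point is that every QA item traces back to something written in the PRD.

```python
def qa_plan_from_prd(prd: dict[str, list[str]]) -> list[str]:
    """Turn PRD sections into a user-level QA checklist (hypothetical PRD keys)."""
    plan: list[str] = []
    for step in prd.get("user_flows", []):
        plan.append(f"[happy path] {step}")
    for case in prd.get("edge_cases", []):
        plan.append(f"[edge case] {case}: what does the user see?")
    for error in prd.get("error_states", []):
        plan.append(f"[error state] Trigger '{error}' and check the message is clear")
    for limit in prd.get("limits", []):
        plan.append(f"[boundary] Test just below, at, and just above: {limit}")
    for rule in prd.get("must_not_happen", []):
        plan.append(f"[what if] Try to make this happen and confirm it can't: {rule}")
    return plan


# Illustrative PRD content only.
prd = {
    "user_flows": ["Open the Invoices page, click Export, open the downloaded CSV"],
    "edge_cases": ["User has zero invoices"],
    "error_states": ["Export service times out"],
    "limits": ["max 10,000 rows per export"],
    "must_not_happen": ["A user exports another account's invoices"],
}

# Print a numbered checklist to walk through like a first-time user.
for number, item in enumerate(qa_plan_from_prd(prd), start=1):
    print(f"{number}. {item}")
```

Missing sections simply produce no items, which is itself a useful signal: an empty category in the plan usually means an unanswered question in the PRD.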

Close the loop: refine requirements and rerun

When you find issues:

- Don’t just patch randomly

- Feed the learning back into the PRD

- Clarify requirements

- Rerun the agent with updated constraints

That loop—plan → build → review → QA → refine—is the real workflow.
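Here's a minimal control-flow sketch of that loop. Each stage is a placeholder callable you'd back with your own tools (an agent run, a review pass, a manual QA session); the names `ship_loop`, `build`, `review`, and `qa`, the pared-down `Requirements` type, and the choice to record findings as new PRD edge cases are all illustrative assumptions rather than a prescribed implementation. What it does encode is the rule above: findings go back into the requirements before the agent reruns, instead of being patched ad hoc.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Requirements:
    """A pared-down PRD: just the part this loop updates (hypothetical structure)."""
    goal: str
    edge_cases: list[str] = field(default_factory=list)


def ship_loop(
    prd: Requirements,
    build: Callable[[Requirements], str],
    review: Callable[[Requirements, str], list[str]],
    qa: Callable[[Requirements, str], list[str]],
    max_rounds: int = 3,
) -> str:
    """Plan -> build -> review -> QA -> refine, until review and QA find nothing.

    Note: refines `prd` in place, so the updated requirements outlive the loop.
    """
    for _ in range(max_rounds):
        code = build(prd)  # Build with AI against the current requirements
        findings = review(prd, code) + qa(prd, code)
        if not findings:
            return code  # Clean review and QA: ready to ship
        # Refine: record each finding in the PRD rather than patching randomly
        for finding in findings:
            if finding not in prd.edge_cases:
                prd.edge_cases.append(finding)
    raise RuntimeError(f"Still failing after {max_rounds} rounds; revisit the plan")


# Toy stand-ins: this "agent" only handles cases that are written down in the PRD.
def fake_build(prd: Requirements) -> str:
    return "export_csv handling: " + ", ".join(prd.edge_cases or ["happy path"])


def fake_review(prd: Requirements, code: str) -> list[str]:
    return []


def fake_qa(prd: Requirements, code: str) -> list[str]:
    return [] if "zero invoices" in code else ["zero invoices"]


prd = Requirements(goal="Export invoices as CSV")
print(ship_loop(prd, fake_build, fake_review, fake_qa))
# → export_csv handling: zero invoices
```

The first round ships only the happy path, QA reports the missing empty state, that finding becomes a written requirement, and the second build passes.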

What the Headlines Miss About “AI Productivity”

You’ll keep hearing stories like:

- “We shipped 10x more code.”

- “We built features in hours.”

- “AI wrote most of the app.”

But what you don’t see in those stories is the hidden work that made it possible:

- Careful feature planning before prompts

- Thorough code review after generation

- Hands-on QA to ensure real behavior

- Iteration and refinement cycles

The output is visible. The engineering process isn’t.

Key Takeaways

- AI coding isn’t prompt engineering—it’s engineering.

- Plan first. The quality of your requirements determines the quality of the output.

- Write a PRD. Even lightweight documentation creates leverage for build, review, and QA.

- Review AI code like you would review a teammate’s PR. This is also how you keep learning.

- Adopt a quality assurance engineer mindset. Tests passing isn’t the same as the feature working.

- Ship via a loop: plan → build → review → QA → refine.

Conclusion: AI Writes the Code, But You Ship the Software

AI makes writing code cheaper and faster. But it doesn’t remove the work that turns code into a reliable product.

The best teams aren’t just prompting faster—they’re planning clearly, reviewing critically, and validating thoroughly.

That’s how AI engineers actually write code.